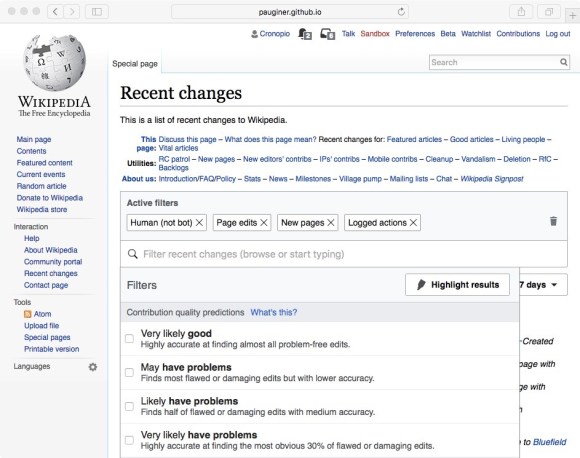

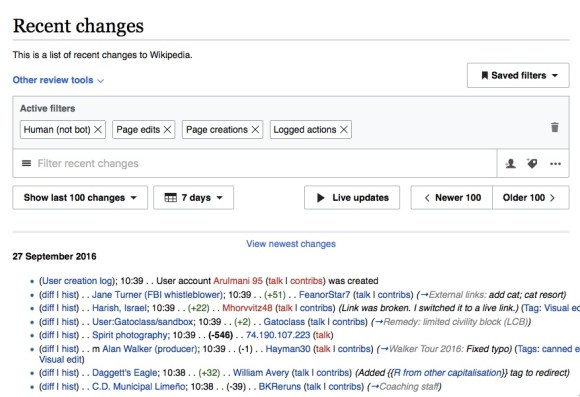

Recently, new filters for edit review transitioned out of beta and became the recent changes page default on a majority of Wikimedia wikis. The new filters, part of the edit review improvements project, comprise a suite of improvements that make edit reviewers on recent changes more effective and efficient. The project also begins a process of creating a gentler edit-review process for new contributors, and expands the scope of the Foundation’s work with machine learning. (See our previous coverage on this blog.)

This release follows a five-month trial as a beta feature. During this period, the team performed three rounds of progressive user research and gathered feedback on an ongoing basis from about 40,000 users who had the feature activated. The iterative process of research, analysis, and feature tweaking produced an entirely redesigned filtering interface along with additional filters and tools, including user-defined highlighting, the ability to save filter sets for re-use, and predictive filters powered by machine learning (on ORES-enabled wikis). An upcoming feature is live update, which will let editors use recent changes for near-real-time monitoring; the demand for such functionality surfaced in our exploratory research, and a prototype that users evaluated during usability testing was very well received.

Making interface changes: why user research?

Because we were making sweeping changes to the way users interact with the functionality on a frequently visited page, the team wanted to make sure the process was as inclusive as possible. From the outset, the goal was to maintain a consistent focus on the users.

To make sure we were meeting our users’ needs and wants, we ran multiple rounds of user testing and have kept feedback channels open continuously since releasing the new filters and functionality as a beta feature.

We scheduled a total of 19 in-depth user interviews over Google Hangouts and YouTube Live. Among the user research methods available, interviews gave us comparatively more focused time with the research participants and helped illuminate their existing workflows, pain points, and desired functionality. The sessions also enabled us to evaluate how the participants interacted with the new filters and features, and to note any difficulties and potential areas for clarification and improvement. Because Hangouts and YouTube Live are remote-friendly, we could interact with users all over the globe, and the ability to share screens got us as close as possible to a contextual inquiry in an editor’s natural environment.

What did we discover?

Over the course of developing and iterating on the new filters and features, we had three main research questions:

- Did participants find utility in the new filters and functionalities for edit review on recent changes?

- To what extent did participants discover, understand, and intend to use the new filters/functionalities on recent changes?

- How did the success of the filters/functionalities translate to the watchlist page?

The three rounds of research sessions yielded overall positive feedback on the suite of changes to the recent changes page. This positive feedback ranged from the very specific (excellent user understanding of and response to the ‘live updates’ button) to the macro (participants felt that the ideas represented by the prototypes were improvements over the previous filtering capabilities, and many intended to use them in the course of their on-wiki workflows).

While discoverability, understanding, and intention to incorporate the new features varied over the three rounds of sessions, we were able to evaluate users’ experiences and incorporate their direct feedback from the sessions to iterate and improve. For example, we changed the location of the advanced filters, removed filter selection conflicts, and changed a couple of visual elements of the filter dropdown.

Finally, we concluded that offering these same features on the watchlist did not generate enough measurable utility or enthusiasm among these particular participant cohorts. However, we did note that the research session participants were primarily recent changes users, and were not always heavy users of the watchlist as well. Therefore, we aim to do additional investigation in the future with watchlist users, and will keep the features for the watchlist in beta for now.

For more details, please visit the research page for new filters for edit review!

What’s next?

As the Global Collaboration team continues its work on edit review improvements and other projects, more input and feedback from you, our users, will be essential to both ideation and product development. If you’d like to learn more and get involved, please use the talk page on the Edit Review Improvements page or contact Daisy Chen directly.

Pau Giner, Senior User Experience Designer, Audiences Design

Daisy Chen, Associate Design Researcher, Audiences Design

Wikimedia Foundation
