Since we increased the upload filesize limit to 100MB on the main wikis a few months ago, it’s been easier to upload large images and medium-size video clips, but there’s always something that’s just a leeeeetle over the limit… MediaWiki’s upload form does have an option for pulling a file from an external web site, which wouldn’t be restricted by the HTTP POST limits in the Squid->Apache->PHP system.

We hadn’t been able to deploy it initially on Wikimedia sites because the web servers are walled off and don’t have direct access to the internet; further, we were worried about safety, given security reports that the CURL library can follow malicious redirects to local filesystem resources.

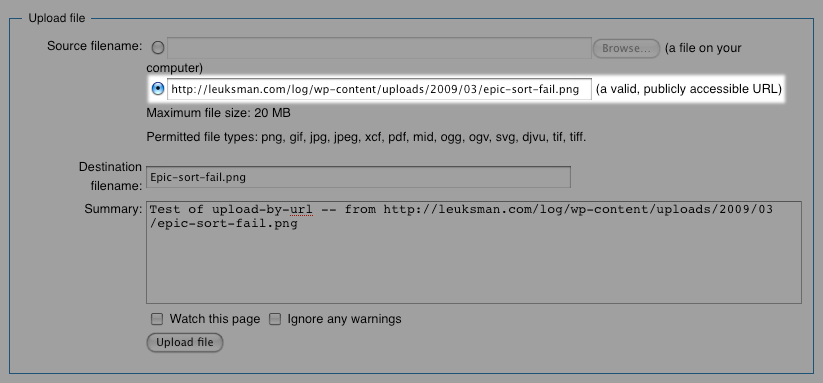

On investigation, Tim found that CURL is safe in the default case: it can only be exploited if redirect following is explicitly enabled, which we don’t do. We also have an HTTP proxy which our internal servers can use to reach outside files… I’ve made some tweaks to Special:Upload to support the proxy setting, and it’s now enabled on test.wikipedia.org:

My very first URL-uploaded file was a screenshot from one of my blog posts. Spiffy!
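
For the curious, the two safety measures described above (never following redirects, and routing outbound requests through an HTTP proxy) can be sketched in a few lines. This is only an illustration in Python using the standard `urllib`, not the actual MediaWiki/PHP code; the names `NoRedirect`, `build_fetcher`, and `fetch`, the size cap, and the proxy address in the usage note are all hypothetical.

```python
import urllib.request


class NoRedirect(urllib.request.HTTPRedirectHandler):
    """Redirect handler that never follows a redirect."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        # Returning None means no follow-up request is made; urllib then
        # reports the 3xx response as an HTTPError instead of chasing it.
        return None


def build_fetcher(proxy_url):
    """Build an opener that goes through the proxy and refuses redirects."""
    proxies = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(proxies, NoRedirect)


def fetch(opener, url, max_bytes):
    """Fetch url, allowing only http(s) and at most max_bytes of content."""
    if not url.lower().startswith(("http://", "https://")):
        raise ValueError("only http(s) URLs are allowed")
    with opener.open(url, timeout=30) as resp:
        # Read one byte past the cap so an oversized file can be detected.
        data = resp.read(max_bytes + 1)
    if len(data) > max_bytes:
        raise ValueError("file exceeds the size limit")
    return data
```

Usage would look something like `fetch(build_fetcher("http://proxy.example.invalid:8080"), url, 100 * 1024 * 1024)`, where the proxy address is a placeholder. The scheme check is an extra precaution of this sketch, so that something like `file:///etc/passwd` is rejected before any request is made.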

The default configuration limits URL uploads to sysops, so for now you’ll need to be a sysop on Test Wikipedia to try it out. If everything seems fairly problem-free we’ll start rolling this out a bit more widely for Commons and other sites.

The upload-by-URL functionality is also needed for the forward-looking work Michael Dale is doing to allow an on-wiki media picker to fetch freely-licensed files from Flickr, Archive.org, and other places.
