Unlike many other websites on the internet, the Foundation runs its own servers and edge distribution to deliver our projects’ content to the world. We do this for several reasons, but key among them is our strong stance on privacy. By avoiding third-party services, we maintain the technical and policy control we need to implement the strict user data handling that we require. Privacy is essential to Wikimedia’s vision of empowering everyone to share in the sum of all human knowledge.

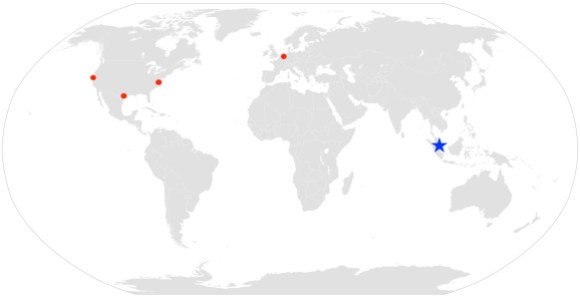

Before this launch, our server network offered (and still offers!) user-facing services from four locations. Three are in the United States (San Francisco, California; Carrollton, Texas; and Ashburn, Virginia), and the fourth is in the Netherlands. Although all of our sites are well connected, users in large parts of the world can face significant delays when accessing Wikimedia projects simply because of their distance from our sites. Jakarta is almost 14,000 kilometers from San Francisco, and Bangalore is nearly 8,000 kilometers from the Netherlands. Light travels incredibly quickly (about 300,000 kilometers per second in a vacuum, and roughly two-thirds of that speed in optical fiber), but loading a web page requires many round trips between the client and the server, and those round trips multiply the basic network delay of reaching our sites.
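To make the round-trip effect concrete, here is a rough back-of-the-envelope sketch. It assumes signals travel through fiber at about two-thirds of the speed of light and that the cable path equals the distances quoted above; real routes are longer, so these figures are lower bounds. The count of four sequential round trips is a hypothetical illustration, not a measurement.

```python
# Rough lower bound on the latency imposed by distance alone.
# Assumptions (not from the post): fiber carries signals at ~2/3 the speed of
# light in a vacuum, and the fiber path equals the straight-line distance.

SPEED_OF_LIGHT_KM_PER_S = 300_000
FIBER_FRACTION = 2 / 3  # assumed propagation speed relative to a vacuum


def min_rtt_ms(distance_km: float) -> float:
    """Minimum round-trip time in milliseconds for a one-way distance."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_PER_S * FIBER_FRACTION)
    return 2 * one_way_s * 1000


def min_delay_ms(distance_km: float, round_trips: int) -> float:
    """Propagation delay accumulated over several sequential round trips."""
    return round_trips * min_rtt_ms(distance_km)


# Distances quoted in the text.
for route, km in [("Jakarta -> San Francisco", 14_000),
                  ("Bangalore -> Netherlands", 8_000)]:
    # Hypothetical: 4 sequential round trips (e.g. TCP handshake, TLS
    # handshake, the HTML request, and one follow-up resource request).
    print(f"{route}: RTT >= {min_rtt_ms(km):.0f} ms, "
          f"4 round trips >= {min_delay_ms(km, 4):.0f} ms")
```

Under these assumptions, a single round trip from Jakarta to San Francisco costs at least about 140 ms, and four sequential round trips cost over half a second before any server work is done.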

This new edge site in Singapore fills the large gap that existed between the Netherlands and San Francisco, bringing lower latency and a better user experience to the region.

Underlying map, public domain. Data center indicators added by the Wikimedia Foundation.

A quick digression to explain how these data centers matter to the users of the Wikimedia projects: whenever someone requests content from one of the sites that we operate, our DNS servers dynamically return one of several distinct IP addresses corresponding to our different edge sites, depending on the location of the user. The closer the edge site is to the user, the lower the latency will be for the secure connection from the user to Wikimedia, resulting in faster page load times.
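A minimal sketch of the idea behind location-based DNS answers: pick the edge site nearest to an estimated client location by great-circle distance. The site coordinates are approximate city locations (the Netherlands site is assumed to be in Amsterdam), and the IP addresses are placeholders from the documentation-only ranges of RFC 5737, not Wikimedia's real addresses. Production GeoDNS typically estimates location from the resolver's address or the EDNS Client Subnet option and applies operator-configured maps rather than raw distance, so treat this as an illustration of the principle only.

```python
import math

# Hypothetical edge-site table: approximate city coordinates and
# documentation-only IPs (RFC 5737), not Wikimedia's real configuration.
EDGE_SITES = {
    "san-francisco": ((37.77, -122.42), "192.0.2.10"),
    "carrollton":    ((32.95, -96.89),  "192.0.2.20"),
    "ashburn":       ((39.04, -77.49),  "192.0.2.30"),
    "netherlands":   ((52.37, 4.90),    "198.51.100.10"),  # assumed: Amsterdam
    "singapore":     ((1.35, 103.82),   "203.0.113.10"),
}

EARTH_RADIUS_KM = 6371.0


def haversine_km(a, b):
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def resolve(client_location):
    """Return (site, ip) for the edge site nearest the client's estimated location."""
    site = min(EDGE_SITES,
               key=lambda s: haversine_km(client_location, EDGE_SITES[s][0]))
    return site, EDGE_SITES[site][1]


print(resolve((-6.21, 106.85)))  # a client in Jakarta
print(resolve((12.97, 77.59)))   # a client in Bangalore
```

With the Singapore entry present, both Jakarta and Bangalore resolve to Singapore; remove it from the table and they fall back to far more distant sites, which is the gap the new edge site fills.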

The edge sites also host content caches, which keep copies of our most popular URLs close to users. Cache misses at an edge site must be served by other caches in the US, and misses there must in turn be served by our application layer and databases. Each step in this chain is slower than the last, so we strive to keep as much content as possible “hot” in the edge cache servers that users reach first.
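The cache hierarchy described above can be sketched as a chain of lookups. In this sketch each tier is a small LRU cache, and a hypothetical `render_page` function stands in for the application layer and databases; the two-tier layout, the LRU eviction policy, and the capacities are assumptions chosen for illustration, not a description of Wikimedia's actual cache software.

```python
from collections import OrderedDict


class LRUCache:
    """Small least-recently-used cache, used for both tiers in this sketch."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.items = OrderedDict()

    def get(self, key):
        if key not in self.items:
            return None
        self.items.move_to_end(key)  # mark as most recently used
        return self.items[key]

    def put(self, key, value):
        self.items[key] = value
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used entry


def render_page(url):
    """Hypothetical stand-in for the application layer and databases."""
    return f"<html>{url}</html>"


def fetch(url, edge, core, log):
    """Look up url at the edge, then the core caches, then the application."""
    body = edge.get(url)
    if body is not None:
        log.append("edge hit")
        return body
    body = core.get(url)
    if body is not None:
        log.append("core hit")
    else:
        log.append("application")
        body = render_page(url)
        core.put(url, body)  # fill the core tier on the way back
    edge.put(url, body)      # fill the edge tier so the next request stays local
    return body


edge, core, log = LRUCache(2), LRUCache(100), []
for url in ["/wiki/A", "/wiki/A", "/wiki/B", "/wiki/C", "/wiki/A"]:
    fetch(url, edge, core, log)
print(log)
```

In this run the second request for `/wiki/A` is an edge hit, while the last one has been evicted from the tiny edge cache and is served by the core tier instead of the slower application layer.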

We began experimentally serving users from Singapore on March 22, and we’ve been gradually expanding service across the region ever since as we tune and validate the site’s performance. Optimizing our regional network peering and bringing up the last few countries will continue over the next few weeks to months, but most of Asia and Oceania is already served by the Singapore site, which now handles roughly 17% of our global traffic.

We’ve seen significant improvements in page load times in the region. We hope that faster page loads will make it easier for users to access the content that the various communities are creating, and easier for users in the region to contribute to the sum of all human knowledge from their own unique perspectives. By that measure, the early performance results suggest a real step forward.

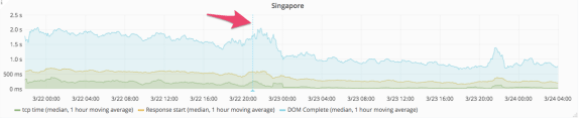

On March 22, we started directing traffic from Singapore itself to the new site. It took several hours for cache hit rates to climb, since much of the requested content had never been served from that location before. Once they did, the median page load time fell by over 40 percent: before the change it was between 1.5 and 1.7 seconds, and afterward it was between 0.8 and 0.95 seconds. (In the image above, the arrow marks the point at which traffic was directed to the new data center.)
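The slow rise in cache hit rates after the switch can be illustrated with a small simulation. This is a sketch under loudly stated assumptions: a Zipf-like popularity distribution over a hypothetical catalog of 10,000 URLs, a single LRU cache holding 1,000 of them, and fixed-size windows of requests standing in for hours. None of these parameters come from Wikimedia's configuration.

```python
import random
from collections import OrderedDict


def warmup_hit_rates(num_urls=10_000, cache_size=1_000,
                     num_requests=20_000, window=2_000, seed=42):
    """Hit rate per window of requests for an edge cache that starts empty."""
    rng = random.Random(seed)
    urls = list(range(num_urls))
    # Assumed Zipf-like popularity: the URL of rank r has weight 1/r.
    weights = [1 / (rank + 1) for rank in urls]
    requests = rng.choices(urls, weights=weights, k=num_requests)

    cache = OrderedDict()  # LRU order: least recently used entry first
    rates, hits = [], 0
    for i, url in enumerate(requests, start=1):
        if url in cache:
            hits += 1
            cache.move_to_end(url)
        else:
            cache[url] = True  # miss: fetched from an upstream tier, then stored
            if len(cache) > cache_size:
                cache.popitem(last=False)
        if i % window == 0:
            rates.append(hits / window)
            hits = 0
    return rates


for n, rate in enumerate(warmup_hit_rates(), start=1):
    print(f"window {n:2d}: hit rate {rate:.0%}")
```

The first window has the lowest hit rate because every popular URL must be fetched from upstream once; later windows settle at a higher steady-state rate, mirroring the warm-up period described for Singapore.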

Of course, Singapore itself is the easiest possible case: every user there is within a few tens of kilometers of the data center. We’ve been pleased, though, to see similar results in other countries. A few examples follow.

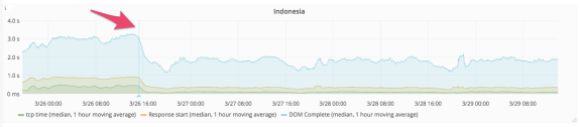

On March 26, we enabled traffic from Indonesia and saw page load times drop from around 3 seconds to under 2 seconds.

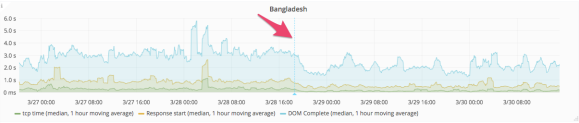

On March 28, we enabled traffic from Bangladesh and saw median page load times drop from between 3.0 and 3.2 seconds to between 1.8 and 2.1 seconds. (This graph is much spikier because Bangladesh sends much less traffic, so the set of performance samples behind each point is much smaller.)
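Why fewer samples make a spikier graph: the median of a small sample fluctuates more from interval to interval than the median of a large one. The sketch below assumes log-normally distributed page load times with a median of about 2 seconds; the distribution, the trial count, and the sample sizes are illustrative assumptions, not Bangladesh's actual measurement data.

```python
import random
import statistics


def median_spread(sample_size, trials=200, seed=7):
    """Standard deviation of the sample median across repeated samples."""
    rng = random.Random(seed)
    medians = []
    for _ in range(trials):
        # Assumed load-time model: log-normal with median exp(0.7) ~ 2.0 s.
        samples = [rng.lognormvariate(0.7, 0.6) for _ in range(sample_size)]
        medians.append(statistics.median(samples))
    return statistics.stdev(medians)


for n in (20, 200, 2000):
    print(f"{n:5d} samples per interval: median varies by ~{median_spread(n):.3f} s")
```

The spread shrinks roughly with the square root of the sample size, so a low-traffic country's median line wobbles visibly even when the underlying performance is stable.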

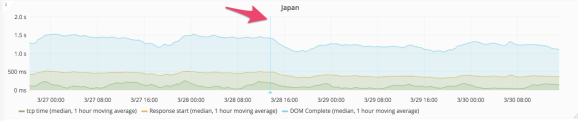

Finally, on March 28, we began directing traffic from Japan to the Singapore data center. This is a particularly interesting case for several reasons: Japan is geographically closer to Singapore than to San Francisco, but not by much; Japan has very good trans-Pacific connectivity; and Japan is one of the largest consumers of Wikimedia properties (currently the third-largest source of pageviews, behind the United States and Germany). Directing Japanese traffic to Singapore reduced the median page load time from around 1.4 seconds to just under 1.2 seconds.
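The relative improvements reported for the four regions can be checked with a little arithmetic. This sketch uses the before/after figures quoted in the text; approximate figures such as “around 3 seconds”, “under 2 seconds”, and “just under 1.2 seconds” are treated as point values, which is an assumption, and where the text gives a range, both ends are kept.

```python
def reduction(before, after):
    """Relative decrease from before to after, as a fraction."""
    return (before - after) / before


# region: ((before_low, before_high), (after_low, after_high)), in seconds.
REGIONS = {
    "Singapore":  ((1.5, 1.7), (0.8, 0.95)),
    "Indonesia":  ((3.0, 3.0), (2.0, 2.0)),  # "around 3" -> "under 2", as points
    "Bangladesh": ((3.0, 3.2), (1.8, 2.1)),
    "Japan":      ((1.4, 1.4), (1.2, 1.2)),  # "around 1.4" -> "just under 1.2"
}

for region, ((b_lo, b_hi), (a_lo, a_hi)) in REGIONS.items():
    mid = reduction((b_lo + b_hi) / 2, (a_lo + a_hi) / 2)
    worst = reduction(b_lo, a_hi)  # smallest improvement consistent with the ranges
    best = reduction(b_hi, a_lo)   # largest improvement consistent with the ranges
    print(f"{region:10s} midpoint {mid:.0%} (range {worst:.0%} to {best:.0%})")
```

Comparing range midpoints, Singapore improves by about 45 percent, Bangladesh by about 37 percent, Indonesia by about a third, and Japan by about 14 percent, which fits the smaller distance advantage noted for Japan.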

From the initial budgeting and vendor evaluations through the final network engineering that’s still ongoing, our Site Reliability Engineering team has been turning this project into reality for over a year, in conjunction with Legal, Purchasing, Performance, and many other teams across the Foundation. We’re learning from this experience and hope to build on it so that future edge sites in other underserved areas of the world are easier to deploy in the coming years.

For more performance data, including information on how we measure the performance of Wikimedia properties and much more detail about the migration to the Singapore data center, please see the Performance Team’s blog.

P.S. We’re hiring!

Brandon Black, Engineering Manager, Traffic

Wikimedia Foundation

The four traffic screenshots in this post are from grafana.wikimedia.org.
