This “Recent research” column originally appeared in the March 2022 issue of The Signpost. It is republished here from the wiki and is therefore dual-licensed under CC BY-SA 4.0 and GFDL 1.3. The authors of this post are Bri, Gerald Waldo Luis and Tilman Bayer.

The first scholarly references on Wikipedia articles, and the editors who placed them there

Reviewed by Bri

The authors of the study “Dataset of first appearances of the scholarly bibliographic references on Wikipedia articles”[1] developed “a methodology to detect [when] the oldest scholarly reference [was] added” to 180,795 unique pages on English Wikipedia. The authors conclude that the dataset can help investigate “how the scholarly references on Wikipedia grew and which editors added them”. The paper includes a list of the top English Wikipedia editors in a number of scientific research fields.

English Wikipedia lacking in open access references

Reviewed by Gerald Waldo Luis

A four-author study titled “Exploring open access coverage of Wikipedia-cited research across the White Rose Universities” was published in the journal Insights on February 2, 2022.[2] As the title implies, it analyzes English Wikipedia citations of research published by the universities of the White Rose University Consortium (Leeds, Sheffield, and York) and argues that open access (OA) is an important consideration for Wikipedians when choosing sources. It concludes that the English Wikipedia still lacks OA references, at least among those citing the consortium’s research.

The study opens by noting that despite the open source nature of Wikipedia editing, there is no requirement to link to OA sources where possible. It criticizes this lack of scrutiny as contrary to Wikipedia’s goal of being an accessible portal to knowledge. The following sections outline Wikipedia’s importance to the research community, which is what makes OA crucial; the World Health Organization recognized this when it announced that it would make its COVID-19 content free for Wikipedia to use. Wikipedia has also been shown to increase the readership of cited papers.

The authors checked a sample of 300 references: “Of the 293 sample citations where an affiliation could be validated, 291 (99.3%) had been correctly attributed.” “In total,” the study summarizes, “there were 6,454 citations of the [consortium’s] research on the English Wikipedia in the period 1922 to April 2019.” It then presents tables breaking these references down into specific categories: Sheffield was cited the most (2,523), and York the least (1,525). Biology-related articles cited the consortium the most (1,707), while art and writing articles cited it the least (7). As the authors expected, journal articles were the most cited output type, especially those from Sheffield (1,565). Another table breaks the references down by OA license. York had the highest share of OA sources among its citations on the English Wikipedia (56%), and fewer of the cited sources carried non-commercial or no-derivatives Creative Commons licenses. The study cautions, however, that this is not a review of all English Wikipedia references.
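The percentages above follow directly from the reported counts. As a quick sanity check, the Python sketch below recomputes the attribution accuracy and, purely as an illustration, the shares of the 6,454 citations attributed to Sheffield and York. These two shares are derived here from the quoted counts; they are not figures reported by the study.

```python
# Counts quoted in the review of the White Rose Universities study.
validated = 293          # sample citations whose affiliation could be validated
correct = 291            # of those, correctly attributed
total_citations = 6454   # consortium citations on English Wikipedia, 1922 to April 2019
by_university = {"Sheffield": 2523, "York": 1525}  # most and least cited


def pct(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


accuracy = pct(correct, validated)
# Illustrative only: share of all consortium citations per university.
shares = {name: pct(n, total_citations) for name, n in by_university.items()}

print(f"Attribution accuracy: {accuracy}%")
for name, share in shares.items():
    print(f"{name}: {share}% of all consortium citations")
```

The accuracy comes out at 99.3%, matching the paper; Sheffield and York account for roughly 39.1% and 23.6% of the total, respectively.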

In the penultimate “discussion” section, the study says that while many references are OA, there is still “some way to go before all Wikipedia citations are fully available [in OA]”, with nearly half of the sampled references paywalled, which underlines the need for more OA scholarly works. The study is nonetheless optimistic about this goal, pointing to Plan S, a recent initiative promoting OA. It also proposes holding more edit-a-thons, which usually involve librarians and researchers who can help with this OA effort, and notes that Leeds has already held one. Its “conclusion” section states that “This [effort] can be achieved through greater awareness regarding Wikipedia’s function as an influential and popular platform for communicating science, [a] greater understanding […] as to the importance of citing OA works over [paywalled works].”

Briefly

- See the page of the monthly Wikimedia Research Showcase for videos and slides of past presentations.

Other recent publications

Other recent publications that could not be covered in time for this issue include the items listed below. Contributions, whether reviewing or summarizing newly published research, are always welcome. Compiled by Tilman Bayer.

“Citation Needed: A Taxonomy and Algorithmic Assessment of Wikipedia’s Verifiability”

From the abstract:[3]

“In this paper, we aim to provide an empirical characterization of the reasons why and how Wikipedia cites external sources to comply with its own verifiability guidelines. First, we construct a taxonomy of reasons why inline citations are required, by collecting labeled data from editors of multiple Wikipedia language editions. We then crowdsource a large-scale dataset of Wikipedia sentences annotated with categories derived from this taxonomy. Finally, we design algorithmic models to determine if a statement requires a citation, and to predict the citation reason.”

“Psychology and Wikipedia: Measuring Psychology Journals’ Impact by Wikipedia Citations”

From the abstract:[4]

“We are presenting a rank of academic journals classified as pertaining to psychology, most cited on Wikipedia, as well as a rank of general-themed academic journals that were most frequently referenced in Wikipedia entries related to psychology. We then compare the list to journals that are considered most prestigious according to the SciMago journal rank score. Additionally, we describe the time trajectories of the knowledge transfer from the moment of the publication of an article to its citation in Wikipedia. We propose that the citation rate on Wikipedia, next to the traditional citation index, may be a good indicator of the work’s impact in the field of psychology.”

“Measuring University Impact: Wikipedia Approach”

From the abstract:[5]

“we discuss the new methodological technique that evaluates the impact of university based on popularity (number of page-views) of their alumni’s pages on Wikipedia. […] Preliminary analysis shows that the number of page-views is higher for the contemporary persons that prove the perspectives of this approach [sic]. Then, universities were ranked based on the methodology and compared to the famous international university rankings ARWU and QS based only on alumni scales: for the top 10 universities, there is an intersection of two universities (Columbia University, Stanford University).”
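The abstract does not spell out how page views are aggregated, so the following Python sketch assumes the simplest reading: sum the page views of each university’s alumni articles, rank universities by that total, and compare the top k against another ranking by set intersection. The function names and toy data are hypothetical, and the paper’s actual method may normalize or weight the counts differently.

```python
from collections import defaultdict


def rank_universities(alumni_views):
    """Rank universities by total page views of their alumni's Wikipedia pages.

    alumni_views: iterable of (university, page_views) pairs, one per alumnus page.
    Returns university names sorted from highest to lowest total views.
    """
    totals = defaultdict(int)
    for university, views in alumni_views:
        totals[university] += views
    # Highest total first; ties broken alphabetically so the output is deterministic.
    return sorted(totals, key=lambda u: (-totals[u], u))


def top_k_overlap(ranking_a, ranking_b, k=10):
    """Return the universities that appear in the top k of both rankings."""
    return set(ranking_a[:k]) & set(ranking_b[:k])


# Hypothetical toy data: (university, page views of one alumnus's article).
sample = [
    ("University A", 120_000),
    ("University B", 90_000),
    ("University A", 15_000),
    ("University C", 60_000),
    ("University B", 50_000),
]
wiki_rank = rank_universities(sample)
other_rank = ["University B", "University D", "University C"]  # hypothetical external ranking

print(wiki_rank)
print(top_k_overlap(wiki_rank, other_rank, k=2))
```

With this toy data, University B (140,000 views) edges out University A (135,000), and only University B appears in both top-2 lists, analogous to the two-university intersection the authors report for the top 10.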

“Creating Biographical Networks from Chinese and English Wikipedia”

From the abstract and paper:[6]

“The ENP-China project employs Natural Language Processing methods to tap into sources of unprecedented scale with the goal to study the transformation of elites in Modern China (1830-1949). One of the subprojects is extracting various kinds of data from biographies and, for that, we created a large corpus of biographies automatically collected from the Chinese and English Wikipedia. The dataset contains 228,144 biographical articles from the offline Chinese Wikipedia copy and is supplemented with 110,713 English biographies that are linked to a Chinese page. We also enriched this bilingual corpus with metadata that records every mentioned person, organization, geopolitical entity and location per Wikipedia biography and links the names to their counterpart in the other language.” “By inspecting the [Chinese Wikipedia dump] XML files, we concluded that there was no metadata that identifies the biographies and, therefore, we had to rely on the unstructured textual data of the pages. […] we decided to rely on deep learning for text classification. […] The task is to assign a document to one or more predefined categories, in our case, “biography” or “non-biography.” […] For our extraction, we used one of the most widely used contextualized word representations to date, BERT, combined with the neural network’s architecture, BiLSTM. BiLSTM is state of the art for many NLP tasks, including text classification. In our case, we trained a model with examples of Chinese biographies and non-biographies so that it relies on specific semantic features of each type of entry in order to predict its category.”

See also an accompanying blog post.

Apparently the authors were unaware of Wikipedia categories such as zh:Category:人物 (or its English Wikipedia equivalent Category:People), which might have provided a useful additional feature for the machine learning task of distinguishing biographies and non-biographies. On the other hand, they made use of Wikidata to generate a training dataset of biographies and non-biographies.

“Learning to Predict the Departure Dynamics of Wikidata Editors”

From the abstract:[7]

“…we investigate the synergistic effect of two different types of features: statistical and pattern-based ones with DeepFM as our classification model which has not been explored in a similar context and problem for predicting whether a Wikidata editor will stay or leave the platform. Our experimental results show that using the two sets of features with DeepFM provides the best performance regarding AUROC (0.9561) and F1 score (0.8843), and achieves substantial improvement compared to using either of the sets of features and over a wide range of baselines”

“When Expertise Gone Missing: Uncovering the Loss of Prolific Contributors in Wikipedia”

From the abstract and paper (preprint version):[8]

“we have studied the ongoing crisis in which experienced and prolific editors withdraw. We performed extensive analysis of the editor activities and their language usage to identify features that can forecast prolific Wikipedians, who are at risk of ceasing voluntary services. To the best of our knowledge, this is the first work which proposes a scalable prediction pipeline, towards detecting the prolific Wikipedians, who might be at a risk of retiring from the platform and, thereby, can potentially enable moderators to launch appropriate incentive mechanisms to retain such ‘would-be missing’ valued Wikipedians.”

“We make the following novel contributions in this paper.

– We curate a first ever dataset of missing editors, a comparable dataset of active editors along with all the associated metadata that can appropriately characterise the editors from each dataset.[…]

– First we put forward a number of features describing the editors (activity and behaviour) which portray significant differences between the active and the missing editors.[…]

– Next we use SOTA machine learning approaches to predict the currently prolific editors who are at the risk of leaving the platform in near future. Our best models achieve an overall accuracy of 82% in the prediction task. […]

An intriguing finding is that some very simple factors like how often an editor’s edits are reverted or how often an editor is assigned administrative tasks could be monitored by the moderators to determine whether an editor is about to leave the platform”
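The two “very simple factors” mentioned above are easy to compute from an edit log. The Python sketch below is a hypothetical illustration, not the authors’ pipeline: it assumes each edit record carries two made-up boolean fields, `reverted` and `admin_task`, and derives per-editor rates that a moderator could track over time. It makes no assumption about which direction of change signals an impending departure; in the paper, such relationships are learned by the prediction models.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Edit:
    editor: str
    reverted: bool    # hypothetical field: was this edit later reverted?
    admin_task: bool  # hypothetical field: was it part of an administrative task?


def editor_features(edits):
    """Return, per editor, the share of edits reverted and the share that were admin tasks."""
    total, reverted, admin = Counter(), Counter(), Counter()
    for e in edits:
        total[e.editor] += 1
        reverted[e.editor] += int(e.reverted)
        admin[e.editor] += int(e.admin_task)
    return {
        name: {"revert_rate": reverted[name] / n, "admin_rate": admin[name] / n}
        for name, n in total.items()
    }


# Hypothetical toy log.
log = [
    Edit("Alice", reverted=False, admin_task=False),
    Edit("Alice", reverted=True, admin_task=False),
    Edit("Alice", reverted=False, admin_task=True),
    Edit("Alice", reverted=False, admin_task=False),
    Edit("Bob", reverted=True, admin_task=False),
    Edit("Bob", reverted=False, admin_task=False),
]
features = editor_features(log)
print(features)
```

Here Alice has one reverted edit and one administrative action out of four edits (both rates 0.25), while Bob has one reverted edit out of two (revert rate 0.5). Recomputing these rates over successive time windows would give a moderator the kind of simple signal the authors describe.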

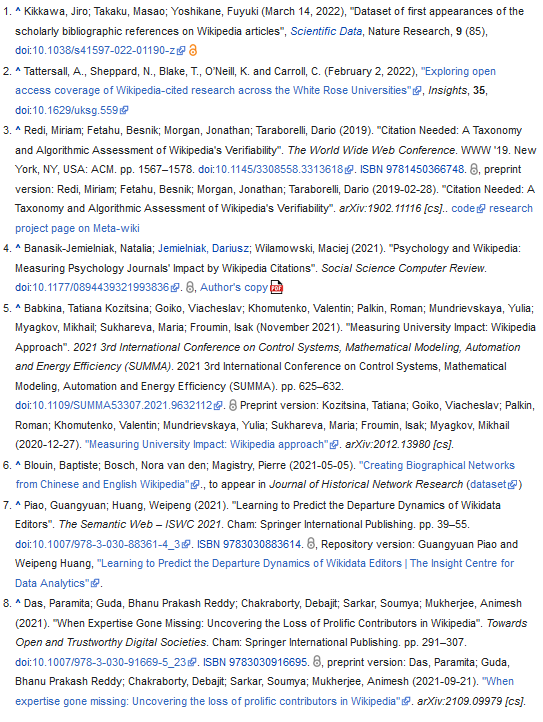

References

Due to software limitations, references are displayed here in image format. For the original list of references with clickable links, see the Signpost column.
