The Wikimedia Foundation is today launching a new global policy to better defend and protect younger readers and contributors on the Wikimedia projects. Our new Combating Online Child Exploitation policy clarifies how the Foundation’s Trust and Safety (T&S) and Legal teams will deal with sensitive content and harmful conduct on the projects.

The Wikimedia Foundation has always maintained a firm commitment to protecting children who use the Wikimedia projects, whether as readers or as volunteer contributors. As such, we have a zero-tolerance policy toward the exploitation of children online, be it psychological, emotional, or sexual. This policy seeks to codify that commitment further and to clarify roles and responsibilities for child protection across all projects under the Wikimedia banner. Online child exploitation is a term used to describe a number of issues that negatively impact minors, including the manufacture and consumption of child sexual abuse material (CSAM) as well as harmful and illegal conduct relating to minors.

Key elements of the policy

The Trust and Safety (T&S) team at the Wikimedia Foundation works to identify, build, and support processes that help to keep readers and contributors safe on the Wikimedia projects. This can include in-depth investigations into reports of harassment, abuse, or legally sensitive situations that cannot be handled through robust community-led processes.

Previous experiences have provided guideposts that helped to inform and develop the policy, and made immediately clear what should form its key elements. These are listed below.

- Establishing a focused definition of what constitutes CSAM on the projects. While usually obvious, some material that is kept on Wikimedia projects might be removed on other websites. Wikimedia’s goal of ensuring free and open knowledge is available to everyone, everywhere, requires us to consider specific cases where content with demonstrable cultural or historic significance may be kept. A particularly well-known example is the Pulitzer Prize-winning photograph “The Terror of War,” which prominently features a nude child.

- Clarifying what constitutes content that promotes, supports or encourages child exploitation. The Wikimedia community maintains articles that discuss the phenomenon of child exploitation in an educational context, as they do with millions of other subject areas. This policy seeks to clarify when that becomes problematic, and where the T&S team may be able to step in with Office-level actions.

- Providing clear information and a dedicated channel through which to report potential exploitation to the Foundation. It is crucial to make information readily available to readers and the volunteer communities on how to report content to the Foundation that they are concerned is potentially exploitative. We maintain a standalone email inbox where links to material in violation of this policy may be sent for evaluation by the Legal and T&S teams.

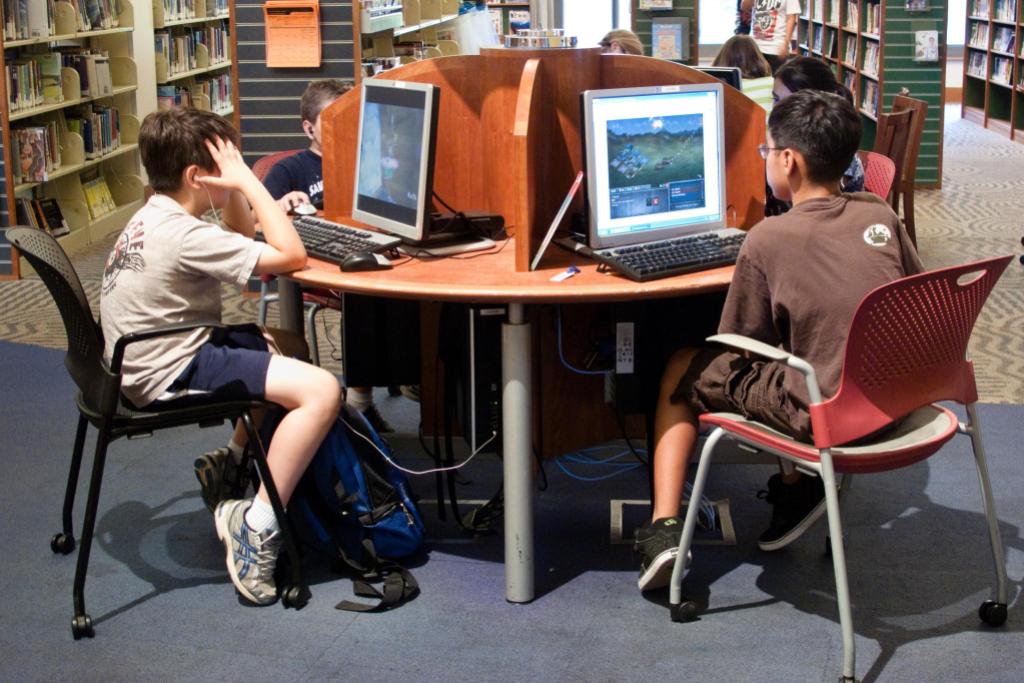

- Providing an informational webpage for younger volunteer editors. The aim of this work is to make sure that minors can continue to safely access and contribute to the Wikimedia projects, not to discourage them from doing so. With that in mind, we have also developed an internal protocol around minors who publish too much personal information about themselves.

How the policy was developed and implemented

This policy was developed alongside members of the Wikimedia community as well as industry and subject-matter experts through a partnership with the Trust and Safety Professionals Association (TSPA).

We spoke with community members about this issue as part of a broader outreach campaign related to the recent changes to the Wikimedia Terms of Use (ToU). During those calls we asked the various communities about what kind of role they wanted to see T&S have in implementing the protection of children on the projects. We consider that, generally, community governance is paramount, and autonomy is a very important tenet of the Wikimedia ecosystem. The Wikimedia communities have many policies already in place to protect younger users of the sites. For that reason, we focused on how best to supplement their work, and learned about the experiences and proposals of different communities to help guide the direction and development of the new policy.

As part of our engagement with the communities, we also sent out a survey asking about the current landscape on different language versions of the projects, and how administrators handled content that was flagged as potential CSAM.

We appreciate the Wikimedia communities’ support for practices that help keep everyone, including children, safe on the projects. We look forward to their continued feedback and contributions to the development of other policies in the future. In addition, to evaluate the impact of this new policy, we will review it after a year of implementation. That review will allow for additions or changes to make sure the policy is as effective as possible.

Through the TSPA, we were able to discuss the impact that the policy might have on the decentralized model of content moderation of the Wikimedia projects, which has made them trusted sources of knowledge worldwide. Wikimedia projects do not have thousands of paid moderators to remove and report such content. On the contrary, almost all content moderation actions on the projects are taken by volunteer contributors, whom the Foundation supports on issues that concern safety. Therefore, it is important that we have a robust set of policies and protocols for handling these incidents when they arise. Supporting the volunteer administrators by enhancing the policies to handle the most sensitive and challenging content is a responsibility we take seriously.

Now that the child protection policy is developed and implemented, it will regularly undergo review by the Legal Department along with the Terms of Use (ToU). Please know that we welcome any questions or comments regarding the new policy, and that you can reach out to the T&S team at ca@wikimedia.org. Together we can help better protect younger readers and volunteers throughout the Wikimedia projects, and make sure that free knowledge can be accessed and created safely.
